Alan M. Davis: 201 Principles of Software Development

Table of Contents

Murphy's Law (ML)

Variants and Alternative Names

- Design for Errors1)

Context

Principle Statement

Whatever can go wrong, will go wrong. So a solution is better the less possibilities there are for something to go wrong.

Description

Although often cited like that, Murphy's Law actually is not a fatalistic comment stating “that life is unfair”. Rather it is (or at least can be seen as) engineering advice to design everything in a way that avoids wrong usage. This applies to everything that is engineered in some way and in particular also to all kinds of modules, (user) interfaces and systems.

Ideally, incorrect usage is strictly impossible. For example, this is the case when the compiler will stop with an error if a certain mistake is made. And in the case of user interface design, a design is better when the user cannot make incorrect inputs as the given controls won't let him.

It is not always possible to design a system in such a way. But as systems are built and used by humans, one should strive for such “fool-proof” designs.

There are different kinds of possible errors that can and according to ML eventually will occur in some way: Replicated data can get out of sync, invariants can be broken, preconditions can be violated, interfaces can be misunderstood, parameters can be given in the wrong order, typos can occur, values can be mixed up, etc.

Note that Murphy's law also applies to every chunk of code. According to the law the programmer will make mistakes while implementing the system. So it is better to implement a simple design, as this will have fewer possibilities to make implementation mistakes. Furthermore code is maintained. Bugfixes will be necessary, present functionality will be changed and enhanced, so every piece of code will potentially be touched in the future. So a design is better the fewer possibilities there are to introduce faults while doing maintenance work.

Rationale

Systems are built and used by humans. And as humans always will make mistakes, there always will be some possibilities for a certain mistake. So if some mistake is possible, eventually there will be someone who makes this mistake. This applies likewise to system design, implementation, verification, maintenance and use as all these tasks are (partly) carried out by humans.

This means the fewer possibilities there are that a mistake is made, the fewer there will be. As mistakes are generally undesirable, a design is better when there are fewer possibilities for something to go wrong.

Note that ML does not claim that everything constantly fails unless there is no possibility to do so. It simply says that statistically in the long run a system will fail if it can.

Strategies

This is a very general principle so there is a large variety of possible strategies to adhere more to this principle largely depending on the given design problem:

- Make use of static typing, so the compiler will report faults

- Make the design simple, so there will be fewer implementation defects (see KISS)

- Use automatic testing to find defects

- Avoid duplication and manual tasks, so necessary changes are not forgotten (see DRY)

- Use polymorphism instead of repeated switch statements

- Use the same mechanisms wherever reasonably possible (see UP)

- Use consistent naming and models throughout the design (see MP)

- Avoid Preconditions and Invariants (see IAP)

- Use assertions to detect problems early.

- …

Caveats

See section contrary principles.

Origin

The exact wording and who exactly coined the term, remains unknown. Nevertheless, it can be stated that its origin is an experiment with a rocket sled conducted by Edward A. Murphy and John Paul Stapp. During this experiment, some sensors had been wired incorrectly. A more accurate quote might read something like this: “If there's more than one possible outcome of a job or task, and one of those outcomes will result in disaster or an undesirable consequence, then somebody will do it that way.” A more detailed version of the history of the experiment and the law can be found in 2) and Wikipedia.

Evidence

- Accepted The principle is widely known and its validity is assumed. Nevertheless sometimes it is rather used as a kind of joke instead of as design advice. See for example Jargon File: Murphy's Law

Furthermore every defect in any system is a manifestation of ML. If there is a fault then obviously something went wrong. The correlation between the number of possibilities for introducing defects and the actual defect count can be regarded trivially intuitive.

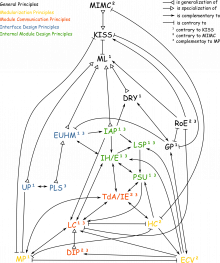

Relations to Other Principles

Generalizations

Specializations

- Don't Repeat Yourself (DRY): Duplication is a typical example of error possibilities. In case of a change, all instances of a duplicated piece of information have to be changed accordingly. So there is always the possibility to forget to change one of the duplicates. DRY is the application of ML to duplication.

- Easy to Use and Hard to Misuse (EUHM): Because of ML an interface should be crafted so it is easy to use and hard to misuse. EUHM is the application of ML to interfaces.

- Uniformity Principle (UP): A typical source of mistakes are differences. If similar things work similarly, they are more understandable. But if there are subtle differences in how things work, it is likely that someone will make the mistake to mix this up.

- Invariant Avoidance Principle (IAP): Invariants are statements that have to be true in order to keep a module in a consistent state. ML states that eventually an invariant will be broken resulting in a hard to detect defect. IAP states that invariants should therefore be avoided. So IAP is the application of ML to invariants.

Contrary Principles

- Keep It Simple Stupid (KISS): On the one hand a simpler design is less prone to implementation errors. In this aspect, KISS is similar to ML. On the other hand, it is sometimes more complicated to make a design “fool-proof” so usage and maintenance mistakes are prevented. In this aspect KISS is rather a contrary principle. Both apply at the same time so a tradeoff has to be made whether correct implementation or correct usage and maintenance are more important in the given case. This means, it is necessary to consider KISS in addition to ML in order to find a suitable compromise. See example 1: parameters.

Complementary Principles

- Fail Fast (FF): Sometimes it is impossible to actually prevent an error. In such a case it is advisable to fail fast so the error is recognized early.

Principle Collections

Examples

Example 1: Parameters

Suppose there are two methods of a string class replaceFirst() and replaceAll() which replace the first or all occurrences of a certain substring, respectively.

The following method signatures are a bad choice:

replaceFirst(String pattern, String replacement) replaceAll(String replacement, String pattern)

Eventually someone will mix up the order of the parameters leading to a fault in the software which is not detectable by the compiler.

So it is better to make parameter lists consistent:

replaceFirst(String pattern, String replacement) replaceAll(String pattern, String replacement)

This is less error prone. When for example a call to replaceFirst() is replaced by a call to replaceAll(), one cannot forget to exchange the parameters anymore. This is how it is done in the Java API.

But here still one could mix up the two string parameters. Although this is less likely, as having the substring to look for first is “natural”, such a mistake is still possible. An alternative would be the following:

replaceFirst(Pattern pattern, String replacement) replaceAll(Pattern pattern, String replacement)

Here both methods expect a Pattern object instead of a regular expression expressed in a string. Mixing up the parameters is impossible in this case as the compiler would report that error. On the other hand using these methods becomes a bit more complicated:

"This are a test.".replaceFirst(new Pattern("are"), "is");

3) instead of

"This are a test.".replaceFirst("are", "is");

The KISS-Principle is about this disadvantage.

Example 2: Casts and Generics

Another example for the application of Murphy's Law would be the avoidance of typecasts:

List l = new ArrayList(); l.add(5); return (Integer)l.get(0) * 3;

This works but it makes a cast necessary and every cast circumvents type checking by the compiler. This means it is theoretically possible that during maintenance someone will make a mistake and store a value other than Integer in the list:

l.add("7");

Murphy's Law claims that however unlikely such a mistake might seem, eventually someone will make it. So it is better to avoid it. In this case this could be done using Generics:

List<Integer> l = new ArrayList<Integer>(); l.add(5); return l.get(0) * 3;

Here this mistake is impossible as the compiler only allows storing integers.

Note that the typecast is rather a symptom than the actual problem here. The problem is, that the List interface is not generic and the symptom is the typecast. The reason for this flaw is, that the List interface predates the introduction of generics in Java.

Example 3: Date, Mutability/Aliasing

In Java the classes ''Date'' as well as the newer ''Calendar'' are mutable which means the reference semantics of Java objects may cause unintended alternations of date values. Eventually someone will copy the reference to a date object instead of copying the object itself, which is usually a mistake when programming with dates.

Date date1 = new Date(2013, 01, 12); Date date2 = date1; System.out.println(date1); // Sun Feb 12 00:00:00 CET 3913 System.out.println(date2); // Sun Feb 12 00:00:00 CET 3913 date1.setMonth(2); System.out.println(date1); // Sun Mar 12 00:00:00 CET 3913 System.out.println(date2); // Sun Mar 12 00:00:00 CET 3913

Furthermore as can be seen in the code above, the month value counterintuitively is zero-based, which results in 1 meaning February. This obviously is another source for mistakes. Also the order of the parameters can be mixed up easily. And lastly this does not refer to a date in 2013 but to one in 3913! The year value is meant to be “two-digit”, so 1900 is added to it. So there are plenty of possibilities for making mistakes. And sooner or later someone will make them.

Because of these and several other flaws in the design of the Java date API, most of the methods in Date are deprecated and also the newer Calendar API will be replaced by a new API in Java 8.

Description Status

Further Reading

Discussion

Discuss this wiki article and the principle on the corresponding talk page.

Pattern.compile() instead of new Pattern(); see Java API: Pattern